If you run a Java program on a Linux machine where an unsupported Java version is installed, you will encounter an exception. For example, if your program is compiled on Java 11, it can't be run on a machine where Java 8 is installed. But the good thing is that you can install multiple Java versions on your machine and quickly change the default JRE version.

In this tutorial, I'll explain how to change the default Java version on a Linux machine. First of all, run the following command to check the current Java version:

    $ java -version
    OpenJDK 64-Bit Server VM (build 25.191-b12, mixed mode)

As you can see above, the default Java version is currently set to OpenJDK JRE 1.8. Now, let's run the following command to see all available Java versions:

    $ sudo update-alternatives --config java
    There are 2 choices for the alternative java (providing /usr/bin/java).

      0 /usr/lib/jvm/java-11-openjdk-amd64/bin/java 1111 auto mode
      1 /usr/lib/jvm/java-11-openjdk-amd64/bin/java 1111 manual mode

Running the above command displays a list of installed Java JDKs and JREs, allowing you to select the one you want to set as default.
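If you want to check the default version from a script rather than by eye, the version string printed by java -version can be reduced to a major number. The following is a minimal sketch, not part of the original steps: `java_major_version` is a hypothetical helper name, and it assumes the version is the quoted field on the first line of the output, as in the examples in this post.

```shell
#!/bin/sh
# Hypothetical helper: turn a Java version string into its major version number.
# Handles the legacy "1.x" scheme (1.8.0_212 -> 8) and the newer one (11.0.2 -> 11).
java_major_version() {
    version=$1
    case "$version" in
        1.*) version=${version#1.} ;;   # strip the legacy "1." prefix
    esac
    # Keep everything before the first ".", "_" or "-"
    printf '%s\n' "${version%%[._-]*}"
}

# Usage sketch: only runs java if it is found on the PATH.
if command -v java >/dev/null 2>&1; then
    # java -version prints to stderr; take the quoted field on the first line
    raw=$(java -version 2>&1 | sed -n '1s/.*"\(.*\)".*/\1/p')
    echo "Default Java major version: $(java_major_version "$raw")"
fi
```

A program compiled for Java 11 needs this number to be at least 11, which is the situation described above.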

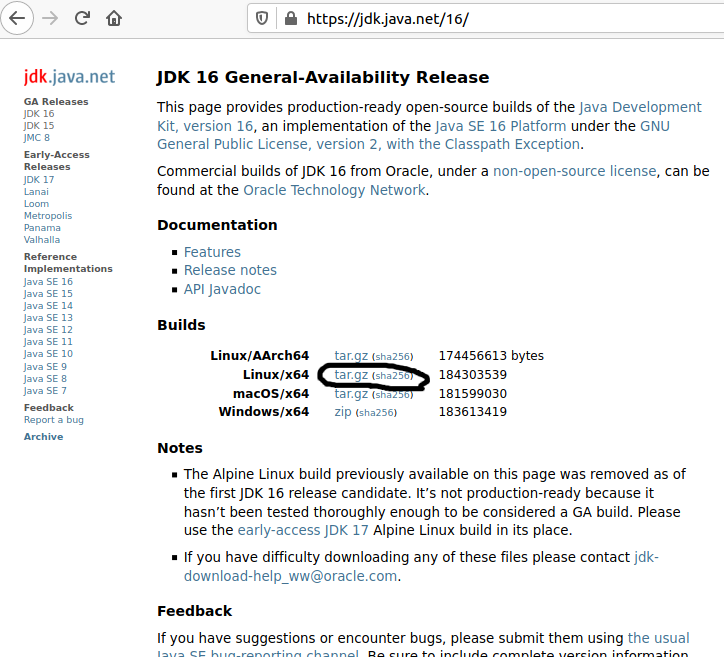

If you are a Java developer, it is normal to have multiple Java versions installed on your machine to support different build environments. When a Java program is compiled, the build environment sets the oldest JRE version the program can support.

By now, if you run echo $JAVA_HOME you should get the expected output.

This method is best for WSL (Windows Subsystem for Linux) Ubuntu: if you have Python and pip already installed, the install is a single command.

For the manual way, go to the directory where the Spark archive was downloaded and run the following commands to install it:

    cd Downloads
    sudo tar -zxvf spark-2.4.3-bin-hadoop2.7.tgz

Note: If your Spark file is a different version, correct the name accordingly.

Configure Environment Variables for Spark

This step is only needed if you installed Spark the "Manual Way". Open the .bashrc file:

    vim ~/.bashrc

Add the following at the end:

    export SPARK_HOME=~/Downloads/spark-2.4.3-bin-hadoop2.7
    export PYTHONPATH=$SPARK_HOME/python:$PYTHONPATH
    export PYSPARK_DRIVER_PYTHON_OPTS="notebook"

Then load the .bashrc file again in the terminal:

    source ~/.bashrc

Finally, you can launch the Spark shell from the terminal.
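Editing .bashrc by hand works, but running the same setup twice can leave duplicate export lines. As a sketch under stated assumptions, the Spark export lines from this step could be appended only when they are missing. `append_once` and `add_spark_vars` are hypothetical helper names, not part of Spark or Ubuntu, and the block only defines functions, so nothing is modified until you call them.

```shell
#!/bin/sh
# Hypothetical helper: append a line to a file only if that exact line
# is not already present.
append_once() {
    file=$1
    line=$2
    touch "$file"
    # -x matches whole lines, -F treats the pattern as a fixed string
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Hypothetical wrapper: add the Spark variables from this tutorial.
# The target file defaults to ~/.bashrc when no argument is given.
add_spark_vars() {
    target=${1:-"$HOME/.bashrc"}
    append_once "$target" 'export SPARK_HOME=~/Downloads/spark-2.4.3-bin-hadoop2.7'
    append_once "$target" 'export PYTHONPATH=$SPARK_HOME/python:$PYTHONPATH'
    append_once "$target" 'export PYSPARK_DRIVER_PYTHON_OPTS="notebook"'
}
```

Calling add_spark_vars with no argument would write to ~/.bashrc; passing a path lets you try it on a scratch file first. As with the manual edit, adjust the Spark directory name if your version differs.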

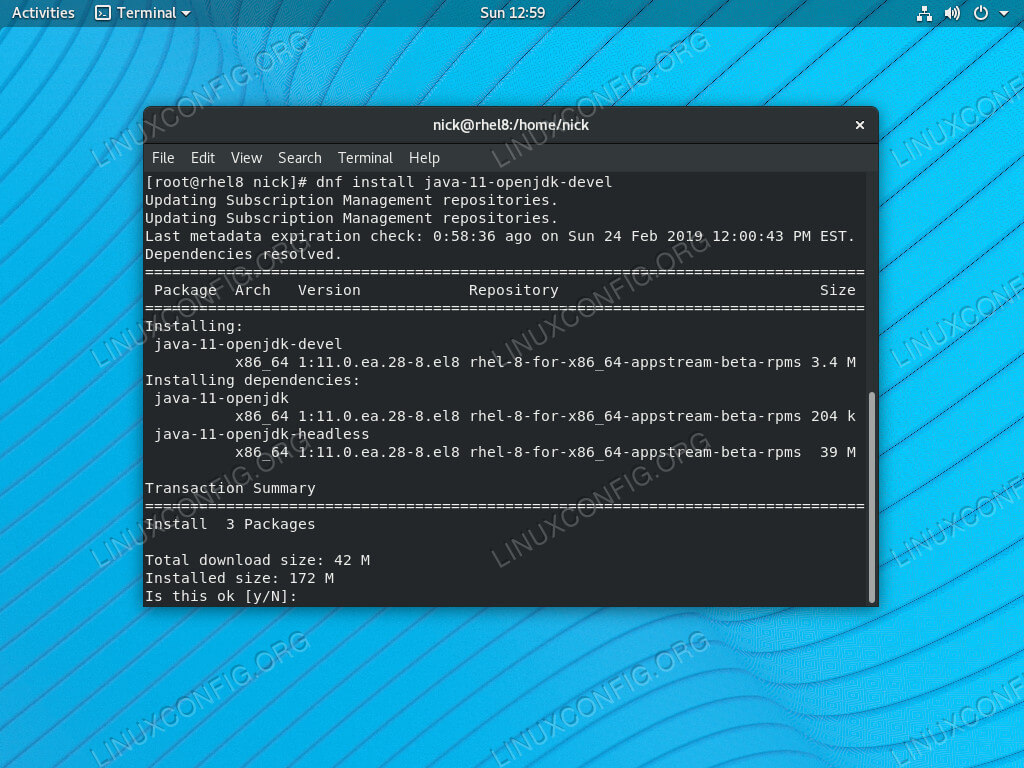

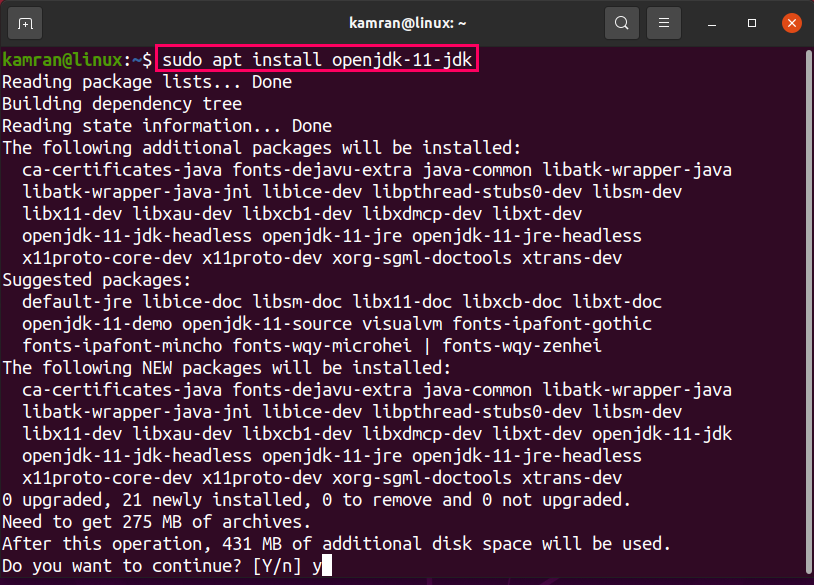

In this tutorial, we will see how to install PySpark with Java 8 on Ubuntu 18.04. We will install Java 8 and Spark and configure all the environment variables. My machine has Ubuntu 18.04, and I am using Java 8 along with Anaconda3. If you follow the steps, you should be able to install PySpark without any problem.

If you don't have Java 8 installed, run the following command in the terminal:

    sudo apt install openjdk-8-jdk

After installation, if you type java -version in the terminal you will get:

    openjdk version "1.8.0_212"
    OpenJDK 64-Bit Server VM (build 25.212-b03, mixed mode)

Remember the directory where you downloaded Spark. I got it in my default Downloads folder, where I will install Spark.

Set the $JAVA_HOME Environment Variable

For this, run the following in the terminal:

    sudo vim /etc/environment

Then, in a new line after the PATH variable, add:

    JAVA_HOME="/usr/lib/jvm/java-8-openjdk-amd64"

Later, in the terminal run:

    source /etc/environment

Don't forget to run the last line in the terminal, as that will create the environment variable and load it in the currently running shell. The output of echo $JAVA_HOME should be:

    /usr/lib/jvm/java-8-openjdk-amd64

Now, some versions of Ubuntu do not run the /etc/environment file every time we open the terminal, so it's better to add it in the .bashrc file, which is loaded to the terminal every time it's opened. So run the following command in the terminal:

    vim ~/.bashrc

The file opens. Add the JAVA_HOME line there; we will add Spark variables below it later. Then load the .bashrc file in the terminal again, or exit this terminal and open another.
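Before moving on, it can help to confirm that JAVA_HOME points at a real Java installation and not just at some directory. The following is a minimal sketch, assuming a Java home is any directory that contains an executable bin/java; `is_java_home` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: succeed only if the given directory looks like a
# Java home, i.e. it contains an executable bin/java.
is_java_home() {
    [ -n "$1" ] && [ -x "$1/bin/java" ]
}

# Usage sketch: report on the JAVA_HOME of the current shell.
if is_java_home "${JAVA_HOME:-}"; then
    echo "JAVA_HOME looks valid: $JAVA_HOME"
else
    echo "JAVA_HOME is unset or does not contain bin/java"
fi
```

If the second message appears in a new terminal, the variable was probably set only in /etc/environment and not in .bashrc.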
